Pin the maturity cards on the wall. Ask: how well do we foresee the change we’re going to inflict with the planned features? “Customers will love it”, the engineer proclaims, putting a few features on tree level. The sales rep sourly adds: “If they ever grasp it”, moving them up into the clouds.

The first maturity tool on our list is the fields of expertise. Experts tend to rate maturity from the perspective of their own field, so their ratings differ. Once the differences are made explicit, they can be discussed.

1) Rate maturity per field of expertise

Here are possible interpretations of the maturity levels per field of expertise:

- Market (M): How sure are the market experts that the thing will earn enough money for the investment to pay off? Stars: cool idea; clouds: there is a claim, a first business case, a promising spot on the market; trees: product positioning defined; road: gathering in-market feedback.

- Experience (X): How sure are the experience experts that the customers will have a great time using the products? Stars: why not; clouds: there are a few solutions we can choose from; trees: we can show that customers love to use it; road: collecting market feedback.

- Domain (D): How sure are the domain experts that the thing will actually create a benefit for customers? Stars: just a wild guess; clouds: some estimates based on laboratory prototypes or simulations; trees: some estimates based on a prototype; road: some estimates and even measurements from usage.

- Technology (T): How sure are the technology experts that they can do it at reasonable cost? Stars: no idea; clouds: quite confident that it can be built; trees: how to do it roughly agreed; road: built.

- Business / Strategy (B): How sure are the business experts that this is the right thing to invest in? Stars: come back later; clouds: great thing, go ahead and invest more; trees: that fits into the overall picture; road: done deal.

- Corporate Abilities (A): How sure are the stakeholders that the thing can be developed, produced, distributed, sold, supported and more? Stars: no idea, we’ll see later; clouds: could work; trees: we have a plan and the resources to do it; road: we’re operational.

To make one thing clear: discussing is the important thing. It’s really worth investing some time to phrase properly what prevents the rating from getting closer to road in each field of expertise. If this rationale is recorded, the team knows what to do next.

The fields you need depend on your situation. In the illustration above, stakeholder commitment (SH) and intellectual property (IP) have been added. In another situation, standards compliance (C) or safety (S) might be important enough to be rated as fields of their own.

2) Discuss the reasons and record them

From this discussion, deriving the overall maturity is easy: take the weakest link. If the market is on clouds level and technology is already moving from trees to road, the technologists might argue that the product is almost finished. But the uncertainty about the market makes it quite likely that a lot of changes to the technology will still have to be made, so this perception of being almost on the road is misleading.

3) The weakest link defines the overall maturity
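The weakest-link rule can be written down in a few lines. The following is only a minimal sketch: the `Maturity` enum, the `overall_maturity` function and the example ratings are illustrative assumptions, not part of the original tooling. The field letters follow the list above.

```python
from enum import IntEnum


class Maturity(IntEnum):
    """The four maturity levels, ordered from least to most mature."""
    STARS = 0
    CLOUDS = 1
    TREES = 2
    ROAD = 3


def overall_maturity(ratings):
    """Return the weakest level and the fields that are stuck on it."""
    if not ratings:
        raise ValueError("at least one field of expertise must be rated")
    level = min(ratings.values())
    weakest = sorted(field for field, rated in ratings.items() if rated == level)
    return level, weakest


# Hypothetical ratings: the market lags behind every other field.
ratings = {
    "M": Maturity.CLOUDS,
    "X": Maturity.TREES,
    "D": Maturity.TREES,
    "T": Maturity.TREES,
    "B": Maturity.TREES,
    "A": Maturity.TREES,
}

level, weakest = overall_maturity(ratings)
print(level.name, weakest)
```

Returning the weakest fields along with the level keeps the focus on the discussion: they are the ones whose recorded rationale says what to do next.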

Having agreed on the overall maturity level, it’s time to agree on the next steps. That’s what the rationale is most helpful for: it points out what prevents the rating from getting closer to road. As a rule of thumb, start with the weakest link.

4) Agree on the next steps

The maturity rating can be done for the whole package delivered by a project. This gives the team and the stakeholders a good big picture of how to proceed. From stars to clouds, the most promising steps are exploratory research, simulations, mocks and throw-away prototypes that quickly resolve the major questions. From clouds to trees, most of the work is about choosing what exactly to do and how best to do it. It’s time to agree on the chosen solution and prove it. From trees to road, most of the development work happens.

5) Break it up to feature level

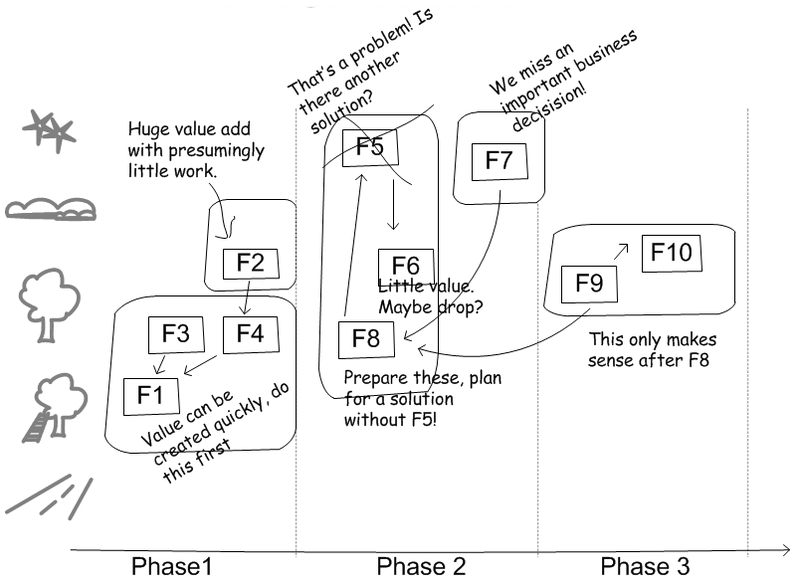

It’s also a good idea to rate the individual features developed by a project (we assume that features are high-level requirements, or epics in agile lingo). This lets us play a very interesting game: when do we start working on the cloudy features and when on those on tree level? What does it take for a feature to mature further? Do we implement a cloudy feature as defined, or do we implement a slightly altered version that is already on tree level?

The worksheet above, done for example with sticky notes on a whiteboard, illustrates the process and the result of such release planning.
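For teams that keep their ratings in a spreadsheet or script rather than on sticky notes, the feature-level view can be sketched as follows. This is an illustrative assumption, not the worksheet itself: the `group_by_level` function and the feature ratings are made up, and each feature's overall level is taken as its weakest field.

```python
from collections import defaultdict
from enum import IntEnum


class Maturity(IntEnum):
    """The four maturity levels, ordered from least to most mature."""
    STARS = 0
    CLOUDS = 1
    TREES = 2
    ROAD = 3


def group_by_level(features):
    """Group feature names by their overall (weakest-field) maturity level."""
    groups = defaultdict(list)
    for name, ratings in features.items():
        groups[min(ratings.values())].append(name)
    # Always list all four levels, even the empty ones.
    return {level: sorted(groups[level]) for level in Maturity}


# Hypothetical per-field ratings for three features.
features = {
    "F1": {"M": Maturity.TREES, "X": Maturity.TREES, "T": Maturity.ROAD},
    "F2": {"M": Maturity.CLOUDS, "X": Maturity.TREES, "T": Maturity.TREES},
    "F3": {"M": Maturity.TREES, "X": Maturity.STARS, "T": Maturity.TREES},
}

for level, names in group_by_level(features).items():
    print(f"{level.name:<7}{', '.join(names) or '-'}")
```

The grouping only prepares the conversation. Whether to mature a cloudy feature first or to build an altered tree-level version remains a decision for the team.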

The last tool we propose in this post is maturity profiling. Here is an example. It would look great mounted on the wall right beside the product backlog.

The sketch above shows the maturity ratings of the same project at three consecutive points in time. The features circled in blue were the ones the team committed to work on next. What do you think about the project’s progress? What about the fact that the project has features in the stars? Should projects have such items at all? What about F9, which turned out to be on star level rather than cloud level once the team worked on it more? What about the most recent state, where the features are either on road level or high up, and only F3 is ready for development?
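A profile recorded over time also makes it easy to spot features whose rating dropped, as happened to F9. The snippet below is a rough sketch under stated assumptions: the `regressions` function and the snapshot data are invented for illustration. Only F9's drop from clouds to stars and F3 being ready for development are taken from the description above.

```python
from enum import IntEnum


class Maturity(IntEnum):
    """The four maturity levels, ordered from least to most mature."""
    STARS = 0
    CLOUDS = 1
    TREES = 2
    ROAD = 3


def regressions(snapshots):
    """List (feature, before, after) for every drop between consecutive snapshots."""
    found = []
    for earlier, later in zip(snapshots, snapshots[1:]):
        for feature, level in later.items():
            if feature in earlier and level < earlier[feature]:
                found.append((feature, earlier[feature], level))
    return found


# Hypothetical profile at three consecutive points in time.
snapshots = [
    {"F3": Maturity.CLOUDS, "F9": Maturity.CLOUDS},
    {"F3": Maturity.TREES, "F9": Maturity.STARS},
    {"F3": Maturity.TREES, "F9": Maturity.STARS},
]

for feature, before, after in regressions(snapshots):
    print(f"{feature}: {before.name} -> {after.name}")
```

A drop is not necessarily bad news. It often means the team learned something that corrected an overly optimistic rating.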

What is really interesting is comparing different projects: how their profiles change over time, and why. We’re looking forward to hearing from you about emerging patterns!

As a concluding remark: here are three tools a product owner can use that tie in very well with agile development. Unlike the destructive approach of managing project risk, these tools constantly ask what to do next to make items more mature. The levels of maturity even give hints on when to start developing the real stuff and when to use quick models to answer questions, as this discussion about planning poker shows.


The fields of expertise are drawn from a product innovation viewpoint. Maturity can be rated from many different viewpoints; it just needs different fields. From the point of view of an investor such as a venture capitalist, for example, the fields could be something like this:

– Offer: things like products and product portfolio; features, costs, application.

– Market: value generation, i.e. perceived benefit, market segment, market penetration; value capturing, i.e. value chain, pricing, price expectations; market regulations.

– Team: the faces behind it, their involvement, their strengths and their dedication.

– Execution: things like finances, IP, innovation and manufacturing processes, sales setup.

An investor will also need another field:

– Exit: when and how to take out the money, at a profit of course.

Here’s an excerpt from a conversation held in our corporate Yammer tool:

A: “‘Field studies, user observations, contextual analyses, and all procedures which aim at determining true human needs are still just as important as ever, but they should all be done outside of the product process. This is the information needed to determine what product to build, which projects to fund. Do not insist on doing them after the project has been initiated. Then it is too late, then you are holding everyone back.’ Interesting article by Don Norman. Hmm, so how does this fit with Zühlke’s way (Stars to Road / UX / Innovation)? When to advocate [..] user research in RUP? In Inception? Or Elaboration? Or is the elaboration phase perhaps already too late? Opinions?”

Markus: “[..] User Research can take place at any time within the [stars to road] framework. [..] Go and see users to identify stars. [..] From stars to cloud: Dig into the specifics of the idea you explore [..] From cloud to tree and to road: user research to dig into details not understood yet [..].

For RUP: [..] An organisation could set up an inception phase to get from an insight via stars to a vision in the clouds. The company would then choose from several RUP visions [..] and start the product development projects (Elaboration to Transition) that bring the idea from cloud to road. [..] we did projects where the customer came with functional specs of what he wanted us to build. We still ran inception and elaboration first (to answer how to build it and prepare construction). In this case, however, if the customer didn’t do a good job in understanding the users, it was awfully difficult to do user research, as it always questions a lot of the work the customer has already done. In this case, inception and elaboration of our project bring the idea just from cloud to tree level. The way from insight to stars to cloud is done prior to the project.

[..] It is not inception or elaboration that determines where to put user research, but the maturity of the idea. [.. Especially] if the idea is unbalanced in maturity, e.g. technology on tree, business on tree, but user experience on stars because nobody cared so far to do user research, it may be really too late to do user research [..]”

A: “[..] I think we might have roughly had the situation you describe [..] my efforts to do user research in elaboration have been met with the reaction described by Don Norman (“Then it is too late, then you are holding everyone back.”). [..] The situation at my client _overall_ is roughly: Technology is on tree level, market is on tree level, business / strategy is on tree level, but Experience (user research!) in the stars, Domain (requirements engineering!) in the stars, Corporate Abilities (business process analysis!) in the stars. According to the advice in your blog post, we should have focussed our efforts much more on the three “weakest links”, i.e. UX, RE, and BPA.

Also, as in your blog post, we have certain feature areas which are already on tree level, but other feature areas that are on stars or cloud level. I think the ‘maturity rating’ tool you describe in your blog post might help us in the upcoming release planning, for the different projects within the program.”